http://www.hyle.org

Copyright © 2008 by HYLE and Joseph E. Earley

How Philosophy of Mind Needs Philosophy of Chemistry

Joseph E. Earley, Sr.*

1. Introduction[1]Around the middle of the twentieth century,

physicists established that all interactions they studied involved only

a small number of fundamental types of force, specifically gravity,

electromagnetism, and the two forces (‘strong’ and ‘weak’) that are

dominant within atomic nuclei. Even earlier, biologists had decided

that they needed no additional (e.g., ‘vital’ or ‘mental’)

forces to deal with physiology. David Papineau (2001) reports that

during the 1950s and 1960s the availability of these two lines of

empirically-based evidence convinced the majority of philosophers to

accept ‘physicalism’ – an approach that rejects dualistic theories of

the human mind (or soul) that have been important features of major

religions. (Physicalists occasionally refer to their position as

‘materialism’ but usually avoid that designation and its overtones.)

Physicalists clearly reject the doctrine generally associated with

Descartes that mental abilities of human persons derive from a

non-physical component – a ‘thinking thing’ – but they are less than

clear about what ‘physical’ might mean in this connection. Active

current controversies in philosophy of mind (McLaughlin et al 2007)

center on competing detailed interpretations of what is entailed by a

commitment to physicalism. This paper sketchily summarizes ‘reductive

physicalism’, briefly mentions alternative approaches, and points out

several presuppositions of reductive physicalism that provide

opportunities for philosophy of chemistry to contribute to the

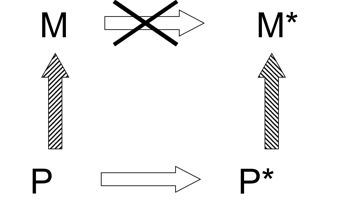

resolution of major problems in philosophy of mind. 2. Reductive PhysicalismJaegwon Kim (e.g., 2003, 2005, 2006, 2007) has developed an influential ‘reductive’ version of physicalism which denies that mental properties (concepts, opinions, beliefs, intentions, decisions, etc.) have causal power. Many, perhaps most, present physicalists favor ‘non-reductive’ versions of physicalism that recognize some causal effectiveness of properties other than microphysical ones. Kim’s system is clearly laid out (Kim 2005) and may represent a nearly limiting position from which most other versions of physicalism deviate, more or less.[2] Kim holds: "The core of contemporary physicalism is the idea that all things that exist in this world are bits of matter and structures aggregated out of bits of matter, all behaving in accordance with laws of physics, and that any phenomenon of the world can be physically explained if it can be explained at all" (Kim 2005, pp. 149-150). Kim reaches the conclusion that mental properties are not causes by using by well-established arguments (Papineau 2001). Mind-body supervenience is a central concept in this discussion. Some time ago Kim wrote: Another dependence relation, orthogonal to causal dependence and equally central to our scheme of things, is mereological dependence (or "mereological supervenience", as it has been called): the properties of a whole, or the fact that a whole instantiates a certain property may depend on the properties and relations had by its parts. Perhaps even the existence of a whole, say a table, depends on the existence of its parts. [Kim 1994, p. 67] More recently, Kim construes mind-body supervenience as: "what happens in our mental life is wholly dependent on, and determined by, what happens with our bodily processes". (Kim 2005, p. 14) That is to say, some underlying physical state P corresponds to each mental property M – and P fully determines M. 
Consider two mental properties M and M* and their physical ‘subvenience bases’ P and P*. If M* invariably follows M, does that show that M is the cause of M*? Possibly, P could be the sole cause of M and also function as the only cause of P* – and P* in turn could be the unique cause of M*. In that case M would not properly be said to be a ‘cause’ of M*. Scheme 1 represents the situation in which M is not a direct cause of M*.
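
The dependence structure described in this paragraph can be made concrete with a small sketch. The Python fragment below is only an illustration under stated assumptions: the node names M, M*, P and P* follow the text, but the edge sets and the helper `causal_ancestors` are hypothetical names introduced here, and treating supervenience as a separate, non-causal kind of edge is a modelling choice rather than anything Kim himself specifies.

```python
# Dependence relations as described in the text (illustrative encoding).
# Causal edge: (cause, effect). Supervenience edge: (base, supervening property).
CAUSAL = {("P", "P*")}
SUPERVENES = {("P", "M"), ("P*", "M*")}


def causal_ancestors(node, causal=CAUSAL, supervenes=SUPERVENES):
    """Return every property from which a purely causal path leads to `node`
    or to the subvenience base of `node`."""
    # A supervening property inherits the causal history of its base.
    targets = {node} | {base for base, top in supervenes if top == node}
    found = set()
    frontier = set(targets)
    while frontier:
        current = frontier.pop()
        for cause, effect in causal:
            if effect == current and cause not in found:
                found.add(cause)
                frontier.add(cause)
    return found


print(causal_ancestors("M*"))          # causal history of M* runs through P
print("M" in causal_ancestors("M*"))   # M does not appear in that history
```

On this encoding, M regularly precedes M* only because both depend on the causal chain from P to P*; whether that is the right way to model mental causation is exactly what is at issue in the text.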

Scheme 1: Mental property M invariably precedes mental property M*. But M is supervenient upon physical property P and M* supervenes on physical property P*. If P* is causally dependent on P there would be no warrant for asserting that M is ‘the cause’ of M*. (Open arrows designate causal dependence; hatched arrows indicate dependence by supervenience.)

In considering the causality of mental properties, Kim invokes two principles:

Acceptance of ‘the causal closure of the physical’ is arguably the defining characteristic of physicalism – but physicalists do not agree about how ‘the physical’ should be interpreted. In support of his rejection of over-determination, Kim cites what he calls "Edwards’ dictum" (referring to American divine Jonathan Edwards [1703-1758]): "vertical [micro/macro] determination excludes horizontal [earlier/later] determination" (ibid., p. 36). In keeping with that principle, Kim adopts a ‘synchronic’ rather than a ‘diachronic’ point of view – that is, he asserts that the mental properties of a person at a particular ‘instant’ are totally determined by the physical properties of the individual at that specific time. Kim holds that mental properties "are defined in terms of their causal roles in behavioral and physical contexts" (ibid., p. 14). He holds that if a mental property-instance can be ‘functionalized’ (that is, if one can specify what that property-instance does), then that property can (in principle) be ‘reduced’ to physical properties – in the sense that the specific physical properties that are the ‘realizers’ of that mental property could possibly be identified (ibid., p. 24). "If pain can be functionalized in this sense, its instances will have the causal powers of pain’s realizers" (ibid., p. 25). After discussing several lines of evidence, Kim concludes: "there is reason to think that intentional/cognitive properties are functionalizable" (ibid., p. 27). In context, this amounts to the denial that such mental properties can properly be said to be causes. On this basis, Kim considers that what might seem to be mental-properties acting as causes are merely demonstrations of the causal efficacies of underlying non-mental realizers of those mental properties. Kim has a somewhat different interpretation of "qualitative states of consciousness" (‘qualia’) (ibid., p. 168). 
He holds that:

Intrinsic properties of qualia are not functionalizable and therefore are irreducible, and hence causally impotent. They stay outside the physical domain, but they make no causal difference and we won’t miss them. In contrast, certain relational facts about them are detectable and functionalizable and can enjoy causal powers as full members of the physical world. [Ibid., p. 173]

Since this interpretation relegates qualia to the status of epiphenomena, Kim’s approach, though not quite as reductive as is possible, qualifies as physicalism "near enough".

3. Causal Seepage

Ned Block (2003) pointed out that the reasoning that reduces mental causation to a lower (e.g., biological) level can also reduce that lower level (e.g., biology to chemistry) – and so on indefinitely. That is, causation ‘seeps away’. Kim responded:

The mental […] will not collapse into the biological […] for the simple reason that the biological is not causally closed. The same is true of macro-level physics and chemistry. It is only when we reach the fundamental level of microphysics that we are likely to get a causally closed domain. (Footnote 34) [Kim 2005, p. 65]

The footnote that appears at this crucial juncture reads:

Actually various complications arise with the talk of levels in this context. In the only levels scheme that has been worked out with some precision, the hierarchical scheme of Paul Oppenheim and Hilary Putnam (1958), it is required that each level includes all mereological aggregates of entities at that level (that is, each level is closed under mereological summation). Thus, the bottom level of elementary particles, in this scheme, is in effect the universal domain that includes molecules, organisms, and the rest. [Ibid., p. 65]

Perhaps surprisingly, Oppenheim and Putnam[3] did not present evidence, or argue, for the existence of a fundamental level such as Kim describes – they simply assumed it: "[reduction requires…] the (certainly true) empirical assumption that there does not exist an infinite descending chain of proper parts, i.e. a series of things x1, x2, x3, such that x2 is a proper part of x1, x3 a proper part of x2, etc." (Oppenheim & Putnam 1958, p. 7). Kim agrees: "The core of contemporary physicalism is the idea that all things that exist […] are bits of matter and structures aggregated out of bits of matter" "in a physical world, a world consisting ultimately of nothing but bits of matter distributed over space-time" (Kim 2005, pp. 149, 7). It seems that Kim’s physicalism could be paraphrased as: ‘if an event of any sort has a cause at t, then it has only an elementary-particle-level cause at t’.

4. Alternatives to ‘Strict Physicalism’

Kim recognizes that his reductive version of physicalism challenges strong and widespread intuitions: "The possibility of human agency, and hence our moral practice, evidently requires that our mental states have causal effects in the physical world" (ibid., p. 9). Several varieties of non-reductive physicalism (e.g., Antony 2007) set out to show (contrary to the conclusion of reductive physicalism) how mental properties can be causes, even given the underlying causal mechanisms described by science. Non-reductive physicalists generally recognize that explanations offered by ‘the special sciences’ (such as chemistry, biology, neuroscience, and experimental psychology) are sufficiently ‘physical’ to be accepted – and do not concern themselves with whether those accounts can be reduced to elementary-particle physics. Kim sums up the central postulate of the non-reductive physicalist outlook (which he calls ‘property dualism’) as: "The psychological character of a creature may supervene on and yet remain distinct and autonomous from its physical nature" (ibid., p. 14). In Kim’s opinion, "this seductive doctrine turns out to be a piece of wishful thinking" (ibid., p. 15). He holds that all non-reductive versions of physicalism imply ‘downward causation’ – that upper-level (e.g. mental) properties influence underlying (e.g., elementary-particle-level) properties – but he claims that no convincing explanation has yet been offered of how upper-level properties or entities might possibly have effects on lower-level events or individuals. In what follows, I refer to the claim that downward causation has not yet been convincingly demonstrated as ‘Kim’s Challenge’.

Robin Hendry (2006) has considered the possibility of downward causation in chemistry. He is mainly interested in quantum-chemical accounts of chemical bonding in molecules.
Hendry points out that, in such calculations, the gross molecular structure (atomic connectivity) is generally not a result of quantum-chemical calculations but is put in as an initial assumption based on chemical experience – and that no evidence or argument shows how to avoid invalidating the reductionist project by making such assumptions. He concludes that, with respect to its attempt to demonstrate that downward causation does not occur in chemistry, "strict physicalism fails, because it misrepresents the details of physical explanation". Hendry does not discuss complex reaction systems, such as those that are involved in brain function which inspired the postulation of downward causation by neuroscientist Roger Sperry (1986).

Kim accepts Block’s concept that causation ‘seeps away’ from the level of human mental properties to lower levels. If causal seepage applies at and below the level of human persons it would seem to be (quasi-Cartesian) anthropocentrism to hold that causal seepage does not apply to coherences that humans (and human activities) comprise.[4] To the extent that Kim defeats Block’s causal seepage argument by establishing that (only) elementary-particle-level explanations account for mental functioning, he shows that elementary-particle-level explanations must also suffice for economics and international politics. Remarkably, reductive physicalists display little interest in detailed results of high-energy physics; and both schools of business and institutes of public policy also ignore that field. It seems that additional sorts of understanding must be necessary to connect physicalist philosophy of mind with ordinary human concerns. Perhaps Kim has discarded something critically important in disposing of the water used in the anti-Cartesian bath.[5]

Kim considers that the kind of reductive physicalism that he and others have developed "is a plausible terminus for the mind-body debate" (Kim 2005, p. 173), but he recognizes that continuing disagreement among physicalists suggests that deep issues remain:[6]

What is new and surprising about the current problem of mental causation is that it has arisen out of the very heart of physicalism. This means that giving up the Cartesian conception of minds as immaterial substances in favor of a materialist ontology does not make the problem go away. On the contrary, our basic physicalist commitments […] can be seen as the sources of our current difficulties. [Ibid., p. 9]

5. Philosophy of Mind Needs Philosophy of Chemistry

Paul Humphreys (1997, p. 15) and David Newman (1996) separately suggested that relationships between ‘physical’ and ‘mental’ events and entities will not be understood without improved philosophical understanding of ‘multilevel’ coherences considered by ‘more basic sciences’. More recently, Brian McLaughlin observed (2007, p. 205):

whether all chemical truths are a priori deductible from physical truths is an issue that is unresolved. One would think that this would be a good place for a priori physicalists to start in making the case that all special science truths are epistemically implied […] But there is, to my knowledge, no discussion of this case in the a priori physicalist literature.

This paper considers three presuppositions of reductive physicalism:

The claim being made here is that each of these three presuppositions corresponds to an opportunity for philosophy of chemistry to make a significant contribution toward resolving currently open issues in the philosophy of mind. This section of the paper briefly identifies these three opportunities, while subsequent sections deal with each of them separately.

The first presupposition calls attention to the currently unsatisfactory state of ‘mereology’ – the branch of logic that deals with wholes and parts. Current versions of mereology cannot successfully deal with chemical combination. Human mental function is determined by coherences that have much more complexity than do chemical molecules. Development of an extension of mereology that could deal adequately with chemical combination would be a major contribution towards a more adequate philosophy of mind.

Kim’s interpretation of his second presupposition requires that upper-level sciences (and the entities they recognize) be ‘reducible’ to elementary-particle microphysics – the lowest-level science (presumed to be ‘fundamental’). However, contemporary physicists have found that structural levels are often separated by certain ‘singularities’, so that in many cases upper-level properties are not sensitive to variation in lower-level properties. Rigorous treatment of whether well-understood chemical systems exemplify such explanatory discontinuities would have important impact on the philosophy of mind.

Kim’s choice of a ‘synchronic’ rather than a ‘diachronic’ approach to causation is inconsistent with the circumstance that human mental functioning necessarily involves networks of processes that have many different characteristic time-parameters. Chemists who work with quantitative models of open, non-linear, dynamic chemical systems should be able to provide a rigorous account of how ‘downward causation’ operates in such systems and adequately respond to ‘Kim’s Challenge’. We now examine each of these three research programs in further detail.

6. ‘Bits of Matter’

Kim’s fundamental insight that "all things […] are bits of matter and structures aggregated out of bits of matter" (Kim 2005, p. 149) suggests that some ‘bits of matter’ are not aggregates – that is, that ‘simples’ exist. The notion that all valid explanation must ultimately rest on a level of submicroscopic ‘elementary’ (i.e., non-composite) constituents (Kim’s bits of matter) has long been a presupposition of much science and philosophy. Hermann Weyl (1949, p. 86) gave a clear statement of this approach: "Only in the infinitely small may we expect to encounter the elementary and uniform laws, hence the world must be comprehended through its behavior in the infinitely small."

The first half of the twentieth century was a golden age for this approach. By the 1930s, chemists and physicists had produced adequate rough explanations of the chemical periodic table, of much organic chemistry, of the internal structure of atoms, and of aspects of the make-up of the atomic nucleus – using only a few kinds of ‘elementary particles’. Correspondingly, Bertrand Russell’s philosophy of ‘logical atomism’ and its development by Ludwig Wittgenstein found an appreciative audience. When physicalism became dominant (during the mid-twentieth century) it was generally assumed that a fundamental level of non-composite entities exists, but that assumption is now questionable. Physics Nobel laureate Hans Dehmelt (1990, p. 539) wrote:

Although no atom smasher has yet succeeded in cracking the electron apart and revealing a structure […] it is far from implausible that, like Democritus’s atom and Dirac’s point proton before, Dirac’s point electron and even its components will turn out to be composite in a never-ending regression.

In contemporary physics, items that function as ‘elementary’ entities in some context (e.g., in a particular energy range) generally turn out to behave as aggregates in other contexts. Protons and neutrons – usually taken to be ‘elementary’ in the 1950s – are now seen as composed of quarks. Quarks and leptons themselves are no longer regarded as ‘simples’ – although there is no agreement as to whether their composition should be understood in terms of ‘strings’ or ‘prolons’, or in some other way. The current understanding of microphysics does not support Weyl’s notion of the existence of ‘elementary and uniform laws’ and provides no basis for the assumption that all scientific understanding is necessarily reducible to some ‘fundamental’ submicroscopic level of description. Kim’s micro-physicalist insight should be regarded as a philosophical postulate, not a scientific conclusion.[7]

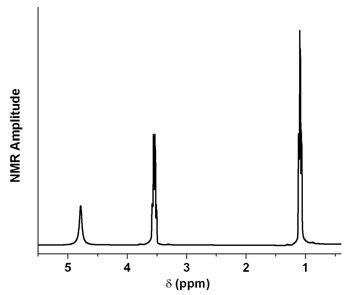

As is well known, Wittgenstein reconsidered his earlier atomistic position (1953/1967, p. 47): "But what are the simple constituent parts of which reality is composed? […] we use the word ‘composite’ (and therefore the word ‘simple’) in an enormous number of ways."

7. Mereology and Structure

Whether or not there are any truly ‘elementary’ units, it is a valid question whether (and if so, how) "structures aggregated out of bits of matter" (Kim 2005, p. 149) could have ontological significance. Kim’s approach builds on ‘mereology’ – the part of philosophical logic that deals with ‘wholes and parts’. As David Lewis (1999, p. 1) puts it:

Mereology is the theory of the relation of part to whole and kindred notions. One of the kindred notions is that of a mereological fusion, or sum: the whole composed of some given parts. […] The fusion of all cats is that large, scattered chunk of cat-stuff which is composed of all the cats there are and nothing else. It has all cats as parts.

Standard mereology holds that any two or more individuals (of whatever sort) constitute a mereological ‘sum’ or ‘fusion’ – but inclusion in such a fusion in no way modifies the individuals so included. Standard mereology considers that an entity is the same whether it is a part of some whole or uncombined. Mereological fusions do not have causal effectiveness separate from that of their parts. Kit Fine (1994, p. 138) points out an important feature of current mereological theory.

Under the forms of nominalism championed by Goodman […] there can be no difference in objects without a difference in their parts: and this implies that the same parts cannot, through different methods of composition, yield different wholes.

Even though all sciences are greatly concerned with ‘structure’, standard mereology cannot deal with ‘structured’ wholes. Structured wholes, such as chemical molecules, generally have causal efficacy in virtue of their ‘connectivity’ – in addition to the causal powers of their constituent atoms. (Levorotatory amino acids are nutritious, corresponding dextrorotatory amino acids are poisonous – although both sorts of molecules have exactly the same component parts.)[8]

Inclusion in a chemical molecule clearly causes significant changes in each of the components that are so included. For example, organic molecules generally contain many hydrogen centers (‘protons’[9]). Ethyl alcohol (CH3CH2OH) has six hydrogen centers (‘atoms’). Those hydrogens are not all the same, however. There are three distinct sorts of hydrogen centers in the ethanol molecule. If a sample of ethanol is placed in a magnetic field and subjected to appropriate radio-frequency radiation of varying frequency, energy may be absorbed in bringing about a change in the relationship between the ‘spin’ of a proton (hydrogen nucleus) and direction of the imposed magnetic field – what is sometimes called a ‘spin-flip’. In such experiments, sharp absorption bands are observed at three separate frequencies (‘chemical shifts’) corresponding to differing detailed characteristics of the electromagnetic environments that characterize the several types of hydrogen nuclei within the ethanol molecule. The intensities of those three absorptions correspond to the numbers (1 and 2 and 3) of hydrogen centers of each of the three structural types that are present in ethanol (see Figure 1).
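
The correspondence between peak intensities and structural types can be illustrated with a short counting sketch. This is a deliberately crude model, not a spectral simulation: it assumes that a hydrogen's environment is fixed solely by the heavy atom it is bonded to, and the labels `C_methyl`, `C_methylene` and `O_hydroxyl` as well as the helper `environment_counts` are hypothetical names introduced here for illustration.

```python
from collections import Counter

# Ethanol, CH3-CH2-OH: each hydrogen is recorded by the heavy atom it is
# bonded to (labels are illustrative, not standard nomenclature).
HYDROGEN_BONDED_TO = ["C_methyl"] * 3 + ["C_methylene"] * 2 + ["O_hydroxyl"]


def environment_counts(bonded_to):
    """Count hydrogens per structural environment, keyed by bonding partner."""
    return Counter(bonded_to)


counts = environment_counts(HYDROGEN_BONDED_TO)
total = sum(counts.values())       # all hydrogen centers in the molecule
ratios = sorted(counts.values())   # relative peak areas under this model

print(counts)
print(ratios)
```

The same list of atoms, stripped of the bonding information, would give a single undifferentiated count of hydrogens; the three groups appear only once connectivity is taken into account.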

Figure 1: The proton nuclear magnetic resonance (NMR) spectrum of ethyl alcohol (CH3CH2OH) dissolved in deuterium oxide (D2O), showing a clear separation of signals corresponding to reorientation (with respect to an external magnetic field) of the spins of three types of protons (hydrogen elemental centers) that are present in the ethanol molecule. Each of these types corresponds to a different ‘connectivity’ (a particular situation in the three-dimensional structure of the molecule). The areas under the peaks vary in 1/2/3 ratios that correspond to the numbers of hydrogen centers of the three types. Figure courtesy of Prof. YuYe Tong.

Those hydrogen centers are strongly influenced by being parts of the ethanol molecule, and by the details of their relative locations in the molecule. (Kurt Wüthrich earned the Nobel Prize in Chemistry 2002 by working out detailed three-dimensional structures of proteins using NMR techniques that exploit differences caused by the structural relationships of parts within protein molecules.) In general, chemical entities are quite different as parts than they are when uncombined (Earley 2005). The ‘mereological monism’ (Fine 1994, p. 138) that Kim assumes certainly does not apply to chemical combination. Since mental functioning involves even more complex coherences, standard mereology is inadequate in that field as well.

Kim’s treatment of ‘qualia’ (mentioned in Section 2) seems to require an extension of standard mereology. Kim’s statement that "certain relational facts about them (qualia) are detectable and functionalizable and can enjoy causal powers as full members of the physical world" (Kim 2005, p. 173) requires that ‘relational facts’ be essential aspects of effective aggregates. This requirement seems consistent with Fine’s point (Fine 1999, pp. 63-64) that the notion of ‘between’ must be part of any adequate description of a ham sandwich (‘some ham and two pieces of bread’ will not do!), but that proviso does not seem consistent with standard mereology.

Standard mereology claims to deal with ‘wholes and their parts’ but fails to deal with structured wholes: mereology clearly needs further development. In physical chemistry, the model of ‘the ideal gas’ assumes that, in gaseous substances, individual molecules occupy no space, and that no forces operate between molecules in gases. These assumptions lead to a well known formula PV = nRT (where R is a constant, and n specifies the quantity of gas in units of ‘moles’). This equation is often useful as an approximation to the relationship between pressure (P), volume (V), and temperature (T) for gases – at least for some gases under certain conditions. However, to deal with many real gases over a range of conditions, one must take account of the facts that molecules of gases do have finite size and that forces in fact operate between gas molecules. These recognitions lead to the derivation of somewhat more complicated (but much more widely applicable) ‘equations of state’, such as the van der Waals equation.
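
The contrast between the two equations of state can be shown numerically. The sketch below assumes the usual textbook form of the van der Waals equation, (P + a n²/V²)(V − n b) = n R T, where a and b are substance-specific constants that do not appear in the text above; the carbon dioxide values used are approximate textbook figures chosen only for illustration, and the function names are hypothetical.

```python
R = 0.0831446  # molar gas constant in L·bar/(mol·K)


def ideal_gas_pressure(n, volume_l, temp_k):
    """Pressure in bar from P V = n R T."""
    return n * R * temp_k / volume_l


def van_der_waals_pressure(n, volume_l, temp_k, a, b):
    """Pressure in bar from (P + a n^2 / V^2)(V - n b) = n R T.

    a corrects for attractive forces between molecules,
    b for the finite volume that the molecules occupy.
    """
    return n * R * temp_k / (volume_l - n * b) - a * n**2 / volume_l**2


# Approximate constants for carbon dioxide (illustrative values only).
A_CO2 = 3.640    # L^2·bar/mol^2
B_CO2 = 0.04267  # L/mol

# One mole confined to one litre at 300 K.
p_ideal = ideal_gas_pressure(1.0, 1.0, 300.0)
p_real = van_der_waals_pressure(1.0, 1.0, 300.0, A_CO2, B_CO2)
print(f"ideal gas:     {p_ideal:.2f} bar")
print(f"van der Waals: {p_real:.2f} bar")
```

Setting a and b to zero recovers the ideal gas result exactly, which is the sense in which the simpler model is a limiting case of the more widely applicable one.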

Present versions of mereology assume that component parts are not changed when they are included in wholes. This assumption is analogous to those that are made in ‘the ideal gas’ model. An extended mereological system that would be able to deal with chemical combination and with more-complex structured coherences would relate to standard mereology as treatments of real gases relate to the theory of the ideal gas. Development of one or more such extended mereologies would be a proper task for philosophy of chemistry – and would greatly benefit philosophy of mind.[10]

8. Which ‘Closure’? What ‘Physical’?

Kim is a firm advocate of ‘closure of the physical’, but restricts that closure to the microphysical level of elementary particles. Alisa Bokulich (2007) reports that Werner Heisenberg held that classical mechanics is a ‘closed theory’: "a tightly knit system of axioms, definitions, and laws that provides a perfectly accurate and final description of a limited domain of phenomena" (Bokulich 2007, p. 91). According to Heisenberg, relativity theory did not ‘falsify’ classical mechanics, but rather showed the limits of the domain within which classical mechanics applies. If nature were to consist of several such ‘closed’ causal domains, ‘the physical’ would have many types of ‘closure’ – and many types of coherence would have real causal efficacy.[11]

In contrast to that pluralist view, reductive physicalism advocates continuation of a scientific approach that has had many successes. Investigation of relatively simple systems led to detection of components of progressively smaller size – many of the insights we now have into the internal structure of atoms came from the study of the hydrogen atom and the dihydrogen molecule. Hans Post (1971, p. 237) pointed out: "Once a scientific theory has proved itself to be useful in some respects […] it will never be scrapped entirely".[12] The reductionist strategy clearly will continue to be used wherever it is appropriate – but reduction should not be regarded (as Kim seems to do) as the only appropriate strategy for science.

Richard Batterman (2002, 2005, 2006) described two contrasting explanatory strategies that are commonly used in contemporary science. Explanation of why a specific instance of a pattern obtains generally requires a ‘causal-mechanical’ account, in terms of the details of processes that produce that instance. Specific characteristics of the interactions involved are critically important for causal-mechanical accounts.
On the other hand, accounting for why it is that patterns of a given type tend to occur requires quite a different explanatory strategy – one that concentrates on factors that unify diverse examples of those patterns, and abstracts from details of specific examples. The second strategy is often required for dealing with problems that involve several structural ‘levels’. Transition from a lower level to an upper level frequently involves a ‘singularity’ – a situation for which calculated quantities increase without limit. Such singularities appear, for example, when ‘correlation effects’ become dominant as myriads of paramagnetic ions adopt the same electron-spin orientation in a magnetic phase-change. In such cases, causal-mechanical explanation does not work, but the alternate mode of treatment can be fully effective. It sometimes happens that results achieved by the second strategy involve recognition of entities that are composites from the point of view of lower-level theory but appear as ‘fundamental units’ in higher-level treatments.[13] Batterman provides detailed discussions of situations in which a higher-level ‘emeritus’ theory (e.g., geometric optics, hydrodynamics) must be invoked to explain phenomena (e.g., structure of rainbows, formation of water droplets) that cannot be treated by a ‘successor’ theory (e.g., the wave theory of light, molecular dynamics) to which the emeritus theory has been formally ‘reduced’. It is very likely that similar situations arise in mental function. Robert Knight reports that a consensus has been reached with respect to the normal activity of human brains. "It is now widely agreed [that] the understanding of [neuronal] network interactions is key to understanding normal cognition" (Knight 2007, p. 1579).[14] It has been known for some years that the behavior of artificial ‘neural networks’ does not depend on whether the ‘unit neurons’ have linear or logarithmic response. Levina et al. 
(2007) recently showed that large-scale neural networks composed of ‘dynamic synapse-models’ display robust ‘self-organized criticality’ – a behavior-pattern characteristic of large-scale dynamic systems. For such devices, network structure determines behavior and the detailed nature of lower-level units is relatively unimportant. Clearly, any adequate philosophy of mind must be able to deal with the emergence of upper-level regularities that are not completely determined by properties of microscopic components. Once main features of the internal

composition of

objects had become clear, much scientific interest shifted to

understanding how the present state of the universe arose from less

complex antecedents. Search for understanding of how structuring of

lower-level entities (that are themselves composites) can lead to

higher-level coherences that have novel causal effectiveness seems to

be more characteristic of present scientific activity than is reduction

to elementary units. Both in the descent of analysis of composition and

in the synthetic ascent of cosmogenesis, nature has turned out to be

highly stratified – not continuous, but displaying many structural

levels. And transitions from one level to another are not always

straightforward, as Kim’s approach seems to require that they be.

Although interactions of several particles (a lower-level problem) are

generally mathematically intractable, experiments on macroscopic

samples (upper-level situations) produce precise values for the mass

and charge of the electron that are now officially accepted as world

standards. How can such an inversion of expectations be possible? How

is this phenomenon to be understood? Physicists working in these active

research areas conclude that systems that involve large numbers of

independent particles are often governed by ‘higher organizing

principles’– and behave as if the detailed natures of lower-level

components are quite unimportant. For instance, low-energy acoustic

properties of crystalline solids are not dependent on the

nature of the components of the crystal but only depend on

characteristics of the crystal structure that are the same irrespective

of what the nature of the component parts of that structure might be.

The crystalline state is the simplest example of what is known as a

‘quantum protectorate’: "A stable state of matter whose generic

low-energy properties are determined by a higher organizing principle

and nothing else" (Laughlin & Pines 2000a, p. 29).

Philosophers and others may find it surprising that upper-level

behavior may be insensitive (within wide limits) to details of

lower-level arrangements, but much of modern physics deals with

phenomena (such as superconductivity, superfluidity, and the Hall

Effect) that are substantially independent of lower-level

properties. Remarkable features of the behavior of macromolecules

suggest that ‘protectorates’ may exist at the chemical level (Laughlin et

al., 2000b) and prevent reduction of chemistry to physics (see also

Mattingly 2003). Putting this suggestion on a philosophically sound

basis, or convincingly refuting it, would be a major contribution to

the philosophy of chemistry and important to the philosophy of mind.

Any present-day interpretation of what should count as ‘physical

causes’ must take into account that in many cases, properties of

complex coherences are independent of the properties of the components

of those coherences – and the brain is certainly complex in the

relevant sense.

9. Diachronic, Si! – Synchronic, No!

Kim uses Jonathan Edwards’ notion that vertical determination (from the microscopic to the macroscopic) supersedes horizontal (earlier to later) causality in arguing that no event has more than one cause – and as a basis for his synchronic (rather than diachronic) approach to mental causation. That principle was consistent with Edwards’ theological doctrine that God re-created the world de novo at each instant. It is more difficult to understand how Kim finds that his synchronic approach[15] is consistent with current scientific understanding.[16] We now understand that each and every entity is involved in many temporal processes at once: vibrations, rotations, translations, chemical interactions, metabolic processes, integration and disintegration of patterns of neuronal activity, reproductive and economic strategies, political relationships, etc. Each of these interactions has characteristic time-parameters that describe how that particular process develops sequentially. A complex biological organism, and perforce a human person, is influenced by myriads of causal processes (internal and external) that have characteristic time-parameters ranging from attoseconds to years (or more). The notion of the state of a system ‘at an instant’ is a high abstraction – and it is not at all clear that that abstraction can be coherently applied to human minds. No description of a real object can be complete – some features must be omitted. Any understanding of a particular individual or process must select a specific time-scale – whether attoseconds or minutes. Any selected time range would focus attention on some aspects of the overall individual or process being investigated and shift other aspects beyond a ‘horizon of invisibility’ so that differences in those aspects cannot be detected.
Clearly, the mental state of a particular person at a given time depends on the then-current arrangement of microscopic components – such as inter-neuronal synapses. But all those arrangements (e.g., connectivity and strength of each synapse) derive their characteristics from prior events (Churchland 2007). Such ‘horizontal’ interactions account for why the state of the system is as it is – and in that sense merit the designation as ‘causes’ – even though their operation may lie outside the horizon of invisibility of a particular investigation. Contemporary scientific explanations operate at temporal levels differing by factors as large as 10^30. The time ranges relevant to human mental functioning stretch from fractions of microseconds to years or more. Merlin Donald (2001, p. 12) claims that non-instantaneous influences are especially significant in the case of human minds:

We have evolved into "hybrid" minds, quite unlike any others, and the reason for our uniqueness does not lie in our brains, which are unexceptional in their basic design. It lies on the fact that we have evolved a deep dependence on our collective storage systems, which hold the key to our self-assembly.

Anti-individualistic objections (Segal 2007, p. 5) to Kim’s doctrine that elementary-particle interactions are the sole determinants of mental properties point out that states of human mind depend on many factors that Kim’s system does not recognize, such as "the socio-linguistic environment". As Marjorie Grene (1978) observed:

In its natural as well as cultural aspect, humanity is something to be achieved, and the person is the history of that achievement. […] To be potentially a person ‘is to have the capacity to operate with symbols, in such a way as it is one’s own activity that makes them symbols and confers meaning upon them’.[17] To be actually a person is to be engaged in the process of acquiring and exercising this ability. […] We don’t just have rationality or language or symbol systems as our portable property. We come to ourselves within symbol systems. They have us as much as we have them.

Taking time more seriously than Kim proposes

should

open routes to possible responses to Kim’s Challenge to elucidate how

downward causation might be possible. Biologists routinely describe

cases that seem relevant to that challenge. Certain tropical birds

carry genes that determine (cause, in one sense) that males of those

species are brightly colored and that they use elaborate

‘dance-sequences’ to impress dun-colored females. But ‘closure’ of that

reproductive strategy (‘lekking’) also determines (causes) which

genes are carried by those birds. (Dun-colored or non-dancing males

have no progeny.) The high productivity of tropical ecosystems is what

allows lekking – an effective means of population limitation – to be an

‘evolutionary stable strategy’ persistent across many generations in

those ecosystems. Such ecological closure has consequences, that is,

closure has causal power.

10. Process Structural Realism

Philosophical problems raised by microphysics have inspired a revival of ‘structuralist’ approaches in philosophy of science. These approaches include both realist (French 2006) and empiricist (van Fraassen 2006) formulations.[18] Structures – arrangements with automatic self-restoration after disturbance – are the main subjects of investigation in most areas of science. Many structured coherences can persist in ‘closed systems’ isolated from their environments. A diamond is a structure of carbon centers which expands or contracts if heated or cooled – but resumes its previous dimensions when returned to its original temperature. Diamonds may be kept in a locked safe indefinitely without any detectable change. Such stable arrangements generally correspond to configurations that have lower ‘thermodynamic free energy’ than any other possible arrangements of the same components: they are therefore designated ‘equilibrium structures’. However, in order to deal with complex systems such as living organisms, another sort of structure must be recognized (Earley 2008a). ‘Dissipative structures’ are self-restoring (stable) coherences that arise and persist in ‘open’ systems (which exchange material and/or energy with their surroundings) under conditions that are quite different from those that correspond to (‘thermodynamic’) equilibrium (Kondepudi & Prigogine 1998). Many of the coherences that are centrally important to human mental functioning are clearly dissipative structures. For instance, Lakatos et al. (2008) have shown that primates select among possibilities for the focus of their attention using a mechanism that involves entrainment of neuronal oscillations – those sustained oscillations clearly indicate that dissipative structures are involved in those primate brain activities.
Important characteristics of dissipative structures are quite different from what might be expected on the basis of intuitions shaped by experience with equilibrium structures (Earley 2008b). Fortunately, main features of dissipative structures can be studied experimentally in relatively uncomplicated chemical systems that can be described by straightforward mathematical models (e.g., Schreiber & Ross 2003, Stemwedel 2006). Such experiments and models often deal with processes in a ‘continuously stirred tank reactor’ (CSTR) – a chamber (of constant volume and controlled temperature) into which solutions that contain reactants (say X and Z) are pumped, and from which excess material exits (Earley 2003a). Independent variables in such experiments include the input concentrations Xo and Zo and the pump-rate ko (see Scheme II).

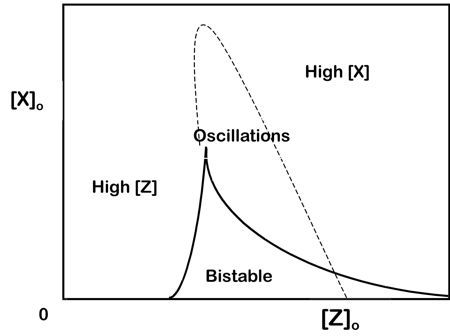

Scheme II: Mechanism for a basic type of chemical oscillator (‘type 2C’ of Schreiber & Ross 2003). Brackets denote concentrations: k and k’ are ‘rate constants’; ko is pump rate; subscript o indicates feed concentrations. The reaction shown on the first line is the autocatalytic production of X from Z. The second reaction is the spontaneous decomposition of X to un-reactive by-products. The third and fourth lines represent pumped inflow and exit of Z and X (the reactant and product).

Figure 2: ‘Bifurcation diagram’ for a model of a basic type of oscillating reaction that is shown in Scheme II. On the left, a non-equilibrium steady-state that resembles the reactants (high Z concentration) is stable. On the right, a steady state that resembles the products (high X concentration) is stable. In the center of the figure (within the dotted curve) both steady states are unstable and continual oscillations occur. In the region labeled ‘bistable’ both steady states are stable – the system may be in either state, depending on its history. Redrawn after Schreiber & Ross 2003.

When the mechanism shown in Scheme II operates, under some conditions the contents of the CSTR eventually reach a ‘stable steady state’ that resembles the input concentrations: [Z] is high and [X] is low. Under other conditions the CSTR reaches a stable steady state that is quite different from the input conditions (most of the Z has been converted to X). In some intermediate conditions (often covering a fairly wide range of the variables), neither of these two non-equilibrium steady states is stable. Instead, the system oscillates repeatedly from a condition close to one steady state to a condition close to the other steady state (see Figure 2 for the ‘bifurcation diagram’ that corresponds to Scheme II). Such oscillations can continue indefinitely (Earley 2003b). If the system is disturbed during the oscillation (by a one-time addition of more X, say), the system will return to the same oscillatory pattern. The system is stable to perturbations and meets the definition of a ‘structure’. It repeatedly follows a closed sequence of states, that is, it ‘moves on’ a closed ‘limit cycle’ in a plane defined by two dependent variables. That sequence of states defines a stable structure of processes that may be quite insensitive to perturbations.
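The return of a perturbed oscillator to its limit cycle can be illustrated numerically. The sketch below does not implement the Scheme II mechanism, whose rate laws are not reproduced in this text. It assumes instead a standard two-variable stand-in model whose limit cycle is the unit circle, and the function names (`euler_step`, `run`, `perturbed_run`), the step size, and the size of the 'kick' are all illustrative choices. The two variables play the role of the two dependent concentration variables, and the kick mimics a one-time addition of one component.

```python
import math


def euler_step(x, y, dt):
    """Advance the stand-in oscillator by one explicit-Euler step.

    Assumed model (NOT Scheme II): in polar form dr/dt = r*(1 - r**2)
    and dtheta/dt = 1, so the circle r = 1 is an attracting limit cycle.
    """
    r2 = x * x + y * y
    dx = x - y - x * r2
    dy = x + y - y * r2
    return x + dt * dx, y + dt * dy


def run(x, y, duration, dt=0.001):
    """Integrate from (x, y) for the given duration; return the end point."""
    for _ in range(int(round(duration / dt))):
        x, y = euler_step(x, y, dt)
    return x, y


def perturbed_run(kick, settle=20.0, recover=20.0):
    """Settle onto the cycle, apply a one-time kick, then let it recover.

    Returns the distance from the origin just before the kick, just
    after the kick, and after the recovery period.
    """
    x, y = run(0.1, 0.0, settle)      # spiral out onto the limit cycle
    r_before = math.hypot(x, y)
    x += kick                         # hypothetical one-time disturbance
    r_kicked = math.hypot(x, y)
    x, y = run(x, y, recover)         # the oscillation re-establishes itself
    r_after = math.hypot(x, y)
    return r_before, r_kicked, r_after


if __name__ == "__main__":
    print(perturbed_run(kick=0.8))
```

In this stand-in model the radius returns to the same value after the disturbance, while the phase of the oscillation may be shifted. That is the sense in which the closed sequence of states, rather than any single state, is what is stable.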
The structure is ‘dissipative’ since the chemical free energy of the product (X) is less than that of the reactant (Z), and the excess free energy produced in the reaction is dispersed into the environment. Living organisms (like other dissipative structures) carry out their ordinary activities using the energy provided by the free energy difference between what they take in and what they excrete (Earley forthcoming). Incidentally, human brains dissipate energy at an especially rapid rate.

Once a system has attained a stable oscillatory condition, properties of that oscillatory regime become well defined. Such properties include the frequency of the oscillations, the detailed shape and amplitude of the variations, and the average values of the independent concentration variables (say, [X]average and [Z]average). Those average values are quite different from the corresponding concentrations that prevail in the input stream, or that characterize either of the two possible non-equilibrium steady states. For any test system (Earley 2003c) that responds with a time-constant that is longer (slower) than the period of the oscillation, the properties of the system will be the time-averaged values.[19] The closure of the network of chemical and physical processes that define the dissipative structure (Earley 2000) brings about a real and measurable change in concentration variables that are the participants in the reactions which make up the closed reaction system. This is an example of ‘downward causation’ in a well-understood chemical system.

The closure of networks of (upper-level)

relationships in open systems of nonlinear chemical reactions gives

rise to sustained oscillations that dramatically change effective

concentrations of chemical components – the same components that are

the active participants in the reactions that are combined. These

effects are formally analogous to biological examples (such as the

dancing birds) in which upper-level coherence influences lower-level

entities. The mathematics that describes those chemical systems is

similar to formalisms that represent the biological examples, but the

chemical cases are more amenable to quantitative modeling. Quantitative

modeling of complex networks of time-dependent chemical processes

should be able to clarify conditions in which ‘downward causation’ may

be shown to operate on the chemical level – and thereby earn whatever

reward may be on offer for meeting Kim’s Challenge.

11. Conclusion

This paper suggests three research programs:

The three approaches outlined above are clearly connected with each other and relate to deep and long-standing philosophical issues, such as whether ‘relations’ between and among two or more individuals (‘polyadic’ properties) can be reduced to characteristics of those individuals singly (‘monadic’ properties). Charles S. Peirce (1903/1997) (whose undergraduate studies were in a school of chemistry and who worked as a consulting chemical engineer in his last years) defended the thesis that polyadic properties cannot be reduced to monadic properties. He maintained the ‘realist’ position that what he called ‘firstness’, ‘secondness’, and ‘thirdness’ were all fundamental and irreducible – and he opposed ‘nominalists’ who claim that polyadic properties can be reduced to monadic and dyadic ones. Kim’s reductive physicalism and standard mereology clearly adopt the nominalist approach that Peirce decried, asserting as they do that mental properties are reducible to properties of postulated elementary particles. This position corresponds to what was once a generally accepted consensus, but there does not seem to be a firm basis in current science favoring that position – though prior metaphysical commitments may suffice for many. The contrasting realist position that Peirce endorsed recognizes polyadic relationships (involving upper-level individuals studied by chemistry and other special sciences) as real, significant, and irreducible. This approach has the pragmatic advantage that it makes contact with current brain science (e.g., Lakatos et al. 2008, Levina et al. 2007) in a way that strict physicalism cannot. It is hard to imagine a feature of contemporary brain/mind scientific investigation for which elementary-particle microphysics would have direct relevance.

O. B. Hardison, Jr. (1989, p. 5), a poet who was Director of the Folger Shakespeare Library in Washington, adapted the notion of ‘horizon of invisibility’ from astrophysics – and pointed out how analogous phenomena occur in science, technology, and other aspects of human culture.

A horizon of invisibility cuts across the geography of modern culture. Those who have passed through it cannot put their experience into familiar words and images because the languages they have inherited are inadequate to the world they inhabit. They therefore express themselves in metaphors, paradoxes, contradictions, and abstractions rather than languages that "mean" in the traditional way – in assertions that are apparently incoherent, or collages using fragments of the old to create enigmatic symbols of the new. The most obvious case in point is modern physics, which confronts so many paradoxes that physicists like Paul Dirac and Werner Heisenberg have concluded that traditional languages are, for better or worse, simply unable to represent the world that science has forced upon them. […] Many people have already passed beyond the barrier separating the phases of modern culture. They are different – odd – perhaps like the converts of the fourth century A.D. who crossed between pagan and Christian culture. The only way these converts could express their experience was through paradoxes and impossibilities.

It seems that Kim and other reductive

physicalists

are on the other side of a horizon of invisibility from those that

investigate the self-organizing dynamic systems that brain function

involves. It is a main task of philosophy of science to mediate between

rapidly advancing fields of scientific investigation and the general

understandings (worldviews) that are widespread in society. The

favorable reception non-reductive versions of physicalism have gained

among philosophers is encouraging in this respect. Much important work

remains to be done in exploring the general philosophical relevance of

recent progress in chemical understanding – so that, perhaps in some

happy future, chemistry (and particularly far-from-equilibrium chemical

dynamics) will no longer lie beyond a horizon of invisibility for most

educated people, as it now does.

Acknowledgements

This research was supported, in part, by a

grant

from the Graduate School of Arts and Sciences of Georgetown University.

The author is grateful to an anonymous referee for insightful and

constructive comments, to Prof. YuYe Tong for Figure 1, to Prof.

Michael Weisberg for calling attention to the work of Richard

Batterman, to Prof. Richard Boyd for helpful comments on an early

version of Earley 2006, and to Prof. Boyd and Prof. Karen Bennett and

the other members of the Cornell Metaphysics Seminar for hospitality

and helpful discussion.

Notes

[1] Earlier versions of this paper were presented at the Eleventh Summer Symposium of the International Society for the Philosophy of Chemistry at the University of San Francisco in August, 2007 and in the graduate metaphysics seminar ‘The Nonfundamental: Aggregation, Causation, Dependence’ at Cornell University on April 15, 2008.

[3] Hilary Putnam has changed his opinion more than once in the five decades since Oppenheim & Putnam 1958 was written. Currently, he strongly advises against including ontology in ethical discussions (Putnam 2005).

[4] Individual biological organisms are functionally integrated collections of smaller items (organs, cells, etc.). Such individual organisms may also function as components of functionally integrated larger units, such as the colonies of the social insects. The evolution of human culture has been characterized by the emergence of progressively more complex patterns of integration of the activities of individual humans into cultural, economic, and political institutions. The general term ‘coherence’ is used in this paper to refer to any functionally integrated unit that is made up of more or less independent components (which themselves are composed of yet other parts).

[5] Alfred North Whitehead observed: "There persists throughout the whole period [from ~1600 to the present] the fixed scientific cosmology which presupposes the ultimate fact of an irreducible brute matter, or material, spread throughout space in a flux of configurations. […] it is an assumption which I shall challenge as being entirely unsuited to the scientific situation at which we have now arrived. It is not wrong, if properly construed. If we confine ourselves to certain types of facts, abstracted from the complete circumstances in which they occur, the materialistic assumption expresses these facts to perfection. But when we pass beyond the abstraction, either by more subtle employment of our senses, or by the request for meanings and for coherence of thoughts, the scheme breaks down at once. The narrow efficiency of the scheme was the very cause of its supreme methodological success. For it directed attention to just those groups of facts which, in the state of knowledge then existing, required investigation" (Whitehead 1925/1967, p. 17).

[6] The debate between reductive and non-reductive physicalists calls to mind the problem that 13th-century ‘Scholastics’ faced in integrating new conceptual schemes (the then recently available Aristotelian hylomorphism) with understandings of human action to which they had strong prior commitments. The solution that Thomas Aquinas proposed was: "intellectual substances are not composed of matter and form; rather, in them form itself is subsisting substance" (Aquinas 1259/1955, bk. II, chap. 54, § 7). This doctrine can be seen as a bridge between earlier Neo-Platonic speculations and later early-modern Cartesian dualism. Kim’s reductive physicalist doctrine involves an assumption that is similar in form to what Aquinas held. Kim’s view can be paraphrased as: ‘in physical substance, structure is not required, but matter is subsisting substance’.

[7] The "Eleatic Principle" (also known as "Alexander’s Dictum") specifies: "everything that we postulate to exist should make some sort of contribution to the causal/nomic order of the world" (Armstrong 2004, p. 37). On that basis, whatever has no causal powers in addition to those of its components cannot properly be said ‘to exist’. Reductive physicalists deny that non-elementary entities that figure in explanations offered by the special sciences have their own causal powers; they are then ‘eliminativists’ – similar to those philosophers who deny that statues and baseballs exist (e.g., Merricks 2001).

[8] Since structured wholes such as chemical molecules do have causal properties in addition to those of their constituents – by the ‘Eleatic’ standard outlined in Note 7, they ‘exist’ in a way that unstructured mereological fusions do not.

[9] Such elemental centers are customarily and informally called ‘hydrogen atoms’ – but without implying that they have the same properties as uncomplexed mono-hydrogen atoms would have. To avoid misunderstanding, this paper will generally avoid this common usage.

[10] Two ways of proceeding on this project could involve well-established mathematical theory. Many chemical species correspond to representations of mathematical ‘groups’ – sets that are characterized by ‘closure’. (For each group there is an operation which, when applied to a member of a group generates another member of the group, rather than something outside the group.) One motivation for the historical development of the notion of mereological fusion was to generate an ontology that was sparser than that provided by set theory. An ontology based on group theory would be sparse and would also be able to deal with structured wholes. Also, James Mattingly (2003) convincingly argued that chemical structure should be regarded as a ‘gauge’ phenomenon. He points out that the Aharonov-Bohm experiment demonstrates that the electrodynamic ‘vector potential A’ has causal power, even though that potential does not have a defined value – since an arbitrary constant may be added without effect. Mattingly suggests that chemical structures should also be treated using the resources of recently developed gauge theories and associated mathematics.

[11] A pluralist, multi-level ontology (Earley 2008a, 2008b) provides an alternative to reductive physicalism that is less ‘ad hoc’ than ‘Dualist Emergentism’ (Nida-Rümelin 2007).

[12] This is a corollary of Post’s "General Correspondence Principle" (GCP) which states that "any acceptable new theory L should account for the success of its predecessor S by ‘degenerating’ into that theory under those conditions under which S has been confirmed by tests" (Post 1971, p. 228). This principle is discussed by da Costa & French (2003, p. 82).

[13] Batterman (2005) rejected the criticism that he had ‘reified’ emeritus-theory entities that needed to be invoked for an adequate explanation of rainbow structure. In my view, Batterman should rather have pointed out that any ontology necessarily involves such "unit-making" (Armstrong 2004). Pluralistic multi-level ontology seems well warranted in the case discussed in this exchange.

[14] Knight continues: "There are also numerous psychiatric disorders, such as depression, seasonal affective disorder, mania and even some case of psychosis, that are episodic and not associated with defined neuro-anatomical damage. Might it be that some of the periodic symptoms are caused by intermittent network dysfunction, caused by disturbed oscillatory dynamics?"

[15] Kim correctly observes (Kim 2005, pp. 36f.) that if a lump of brass has some micro-structural property M that causes it to be yellow at time t, it will be yellow no matter what was true at (t – Δt). This recalls the eliminativist claim that no baseball ever broke a window. Eliminativists hold that glass has often been broken by certain myriads of atoms "acting in concert" – but not by any baseball. However, those millions of millions of millions of millions of atoms just happened to act in concert, because each of those atoms was enmeshed in complex networks of chemical bonds, and in physical entanglements, with all the other atoms. Similarly each lump of brass has the properties it has (and not others) because of factors that stretch well beyond the lump itself into trade networks, foundries, and mines.

[16] Kim’s position seems to be a version of ‘presentism’ – the doctrine that neither past nor future entities have causal efficacy.

[17] The quotation is a development of ideas of A.J.P. Kenny.

[18] A less-radical structuralist view that has been developed in the philosophy of chemistry holds that when networks of dynamic relationships among components have certain types of closure, then new causally effective entities come into being (Earley 2006). On this basis, coherences on diverse levels may or may not have ontological significance depending on their internal constitution and on the interactions in which they are involved. Such ontological novelty could occur at many levels, including (but not limited to) the level of consciousness. This view is consistent with Kit Fine’s argument (mentioned on page 10) that mereology must recognize ‘relationships’ as well as parts of other sorts. Kim’s 1994 characterization of mereological supervenience recognizes relationships as worthy of special mention – but in later descriptions of supervenience he conflates relations and properties.

[19] By the

‘Eleatic

principle’ discussed in Notes 7 and 8, the oscillating chemical system as

a unit is ontologically significant. For further discussion of this

important concept, see Earley 2003, 2008a, 2008b, forthcoming. ReferencesAntony, L.: 2007, ‘Everybody Has Got It: A Defense of Non-reductive Materialism’, in: B. McLaughlin & J. Cohen (eds.), Contemporary Debates in the Philosophy of Mind, Malden, MA: Blackwells, pp.143-159. Aquinas, T.: 1259/1955, Summa Contra Gentiles, ed. J. Kenny, New York: Hanover House [http://www.diafrica.org/kenny/CDtexts/ContraGentiles2.htm#54]. Armstrong, D.M.: 2004, Truth and Truthmakers, Cambridge: Cambridge UP. Batterman, R.W.: 2002, The Devil in the Details: Asymptotic Reasoning in Explanation, Reduction, and Emergence, Oxford: Oxford UP. Batterman, R.W.: 2005, ‘Response to Belot’s "Whose Devil? Which Details?"’, Philosophy of Science, 72 (1), 154-163. Batterman, R.W.: 2006, ‘Hydrodynamics versus Molecular Dynamics: Intertheory Relations in Condensed Matter Physics’, Philosophy of Science, 73 (5), 888-904. Block, N.: 2003, ‘Do Causal Powers Drain Away’, Philosophy and Phenomenological Research, 67 (1), 133-150. Bokulich, A.: 2006, ‘Heisenberg Meets Kuhn: Closed theories and paradigms’, Philosophy of Science, 73 (1), 90-107. Churchland, P.: 2007, ‘The Evolving Fortunes of Eliminative Materialism’, in: B. McLaughlin & J. Cohen (eds.), Contemporary Debates in the Philosophy of Mind, Malden, MA: Blackwells, pp. 160-181. da Costa, N. & French, S.: 2003, Science and Partial Truth: A Unitary Approach to Models and Scientific Reasoning, New York: Oxford UP. Dehmelt, H.: 1990, ‘Experiments on the Structure of an Individual Elementary Particle’, Science, 247, 539-545. Donald. M.: 2001, A Mind So Rare, New York: Norton. Earley, J.: 2000, ‘Varieties of Chemical Closure’, in: J. Chandler, & G. Van De Vijver (eds.), Closure: Emergent Organizations and Their Dynamics (Annals of the New York Academy of Science, vol. 901), pp. 122-131. Earley, J.: 2003a, ‘Constraints on the Origin of Coherence in Far-from-equilibrium Chemical Systems’, in: T. Eastman & H. 
Keeton (eds), Physics and Whitehead: Quantum, Process and Experience, Albany: State University of New York Press, pp. 63-73. Earley, J.: 2003b, ‘On the Relevance of Repetition, Recurrence, and Reiteration’, in: D. Sobczyñska, P. Zeidler & E. Zielonaka-Lis (eds.), Chemistry in the Philosophical Melting Pot, Frankfurt: Peter Lang, pp. 171-186. Earley, J.: 2003c, ‘How Dynamic Aggregates May Achieve Effective Integration’, Advances in Complex Systems, 6, 115-126. Earley, J.: 2005, ‘Why There is No Salt in the Sea’, Foundations of Chemistry, 7, 85-102. Earley, J.: 2006a, ‘Chemical "Substances" That Are Not "Chemical Substances"’, Philosophy of Science, 73 (5), 841-852. Earley, J.: 2006b, ‘Some Philosophical Implications of Chemical Symmetry’, in: D. Baird & E. Scerri (eds.), Philosophy of Chemistry: Synthesis of a New Discipline, Dordrecht: Springer, pp. 207-220. Earley, J.: 2008a, ‘Ontologically Significant Aggregation: Process Structural Realism (PSR)’, in: M. Weber & W. Desmond (eds.), The Handbook of Whiteheadian Process Thought, Frankfurt: Ontos, vol. 2, pp. 179-192. Earley, J.: 2008b, ‘Process Structural Realism, Instance Ontology, and Societal Order,’ in: Franz Riffert (ed.), Whitehead’s Process Philosophy: System and Adventure in Interdisciplinary Research, Discovering New Pathways, Berlin: Alber, pp. 157-173 (forthcoming). Earley, J.: forthcoming, ‘Life in the Interstices: Systems Biology and Process Thought’, in: S. Koutrofinis (ed.), Biology and Process Philosophy, Frankfurt: Ontos. Fine, K.: 1994, ‘Compounds and Aggregates’, Noûs, 28 (2), 137-158. Fine, K.: 1999, ‘Things and Their Parts’, Midwest Studies in Philosophy, 23, 61-74. French, S.: 2006, ‘Structure as a Weapon of the Realist’, Proceedings of the Aristotelian Society, 106, 167-185. Grene, M.: 1978, ‘Paradoxes of Historicity’, Review of Metaphysics, 32, 15-16. Hardison, O.: 1989, Disappearing through the Skylight: Culture and Technology in the Twentieth Century, New York: Viking Penquin. 
Hendry, R., 2006: ‘Is there Downward Causation in Chemistry’, in D. Baird & E. Scerri (eds.), Philosophy of Chemistry: Synthesis of a New Discipline, Dordrecht: Springer, pp. 207-220. Humphreys, P.: 1997, ‘How Properties Emerge’, Philosophy of Science, 64, 1-17. Kim, J.: 1994, ‘Explanatory Knowledge and Metaphysical Dependence’, Philosophical Issues, 5, 51-69. Kim, J.: 2003, ‘Blocking Causal Drainage and Other Maintenance Chores with Mental Causation’, Philosophy and Phenomenological Research, 67 (1), 151-176. Kim, J.: 2005, Physicalism, or Something Near Enough, Princeton: Princeton UP. Kim, J.: 2006, ‘Emergence: Core Ideas and Issues’, Synthese, 151, 547–559. Kim, J.: 2007, ‘Causation and Mental Causation’, in: B. McLaughlin & J. Cohen (eds.), Contemporary Debates in the Philosophy of Mind, Malden, MA: Blackwells, pp. 227-242. Knight, R.: 2007, ‘Neural Networks Debunk Phrenology’, Science, 316, 1578-1579. Kondepudi, D. & Prigogine, I.: 1998, Modern Thermodynamics: From Heat Engines to Dissipative Structures, New York: Wiley. Lakatos, P.; Karmos, G.; Mehta, A.; Ulbert, I. & Schroeder, C.: 2008, ‘Entrainment of Neuronal Oscillations as a Mechanism of Attentional Selection, Science, 320, 110-113. Laughlin, R. & Pines, D.: 2000a, ‘The Theory of Everything’, Proceedings of the National Academy of Science of the USA, 97 (1), 28-31. Laughlin, R.: 2005, A Different Universe: Reinventing Physics from the Bottom Down, New York: Basic. Laughlin, R.; Pines, D.; Schmalian, J.; Stojkoviæ, B. & Wolynes, P.: 2000b, ‘The Middle Way’, Proceedings of the National Academy of Science of the USA, 97 (1), 32-37. Levina, A.; Herrmann, J. & Geisel, T.: 2007, ‘Dynamical synapses causing self-organized criticality in neural networks’, Nature Physics, 3 (Dec.), 857-860. Lewis, D.: 1991, Parts of Classes, Oxford: Blackwell. Mattingly, J.: 2003, ‘Gauge Theory and Chemical Structure’, in: J. 
Earley (ed.), Chemical Explanation: Characteristics, Development, Autonomy (Annals of the New York Academy of Science, vol. 988), pp. 193-202. McLaughlin, B. & Cohen, J. (eds.): 2007, Contemporary Debates in the Philosophy of Mind, Malden, MA: Blackwells. Merricks, T.: 2001, Objects and Persons, New York: Oxford UP. Newman, D.: 1996, ‘Emergence and Strange Attractors’, Philosophy of Science, 63, 245-261. Nida-Rümelin, M.: 2007, ‘Dualist Emergentism’, in: B. McLaughlin & J. Cohen (eds.), Contemporary Debates in the Philosophy of Mind, Malden, MA: Blackwells, pp. 269-286. Oppenheim, P. & Putnam, H.: 1958, ‘Unity of Science as a Working Hypothesis’, in: H. Feigl, M. Scriven & G. Maxwell (eds.), Minnesota Studies in the Philosophy of Science, vol. 2, Minneapolis: University of Minnesota Press, pp. 3-36. Papineau, D.: 2001, ‘The Rise of Physicalism’, in: C. Gillett & B. Loewer (eds.), Physicalism and Its Discontents, Cambridge: Cambridge UP, pp. 3-36. Peirce, C.: 1903/1997, Lectures on Pragmatism, Albany: State University of New York Press. Post, H.: 1971, ‘Correspondence, Invariance and Heuristics’, Studies in the History and Philosophy of Science, 2, 213-255. Putnam, H.: 2005, Ethics without Ontology, Cambridge: Harvard UP. Schreiber, I. & Ross, J.: 2003, ‘Mechanism of Oscillatory Reactions Deduced from Bifurcation Diagrams’, Journal of Physical Chemistry A, 107, 9846-9859. Segal, G.: 2007, ‘Compositional Content and Propositional Attitude Attributions’, in: B. McLaughlin & J. Cohen (eds.), Contemporary Debates in the Philosophy of Mind, Malden, MA: Blackwells, pp. 5-19. Sperry, R.: 1986, ‘Macro- versus Micro-determination’, Philosophy of Science, 53, 265-275. Stemwedel, J.: 2006, ‘Getting More with Less: Experimental Constraints and Stringent Tests of Mechanisms of Chemical Oscillators’, Philosophy of Science, 73 (5), 743-754. van Fraassen, B.: 2006, ‘Structure: Its Shadow and Substance’, The British Journal for the Philosophy of Science, 57, 275-307. 
Weyl, H.: 1949, Philosophy of Mathematics and Natural Science, Princeton: Princeton UP. Whitehead, A.N.: 1925/1967, Science and the Modern World, New York: Macmillan. Wittgenstein, L.: 1953/1969, On Certainty, ed. G. Anscombe & G. von Wright, Oxford: Blackwell. Joseph E. Earley, Sr.: |